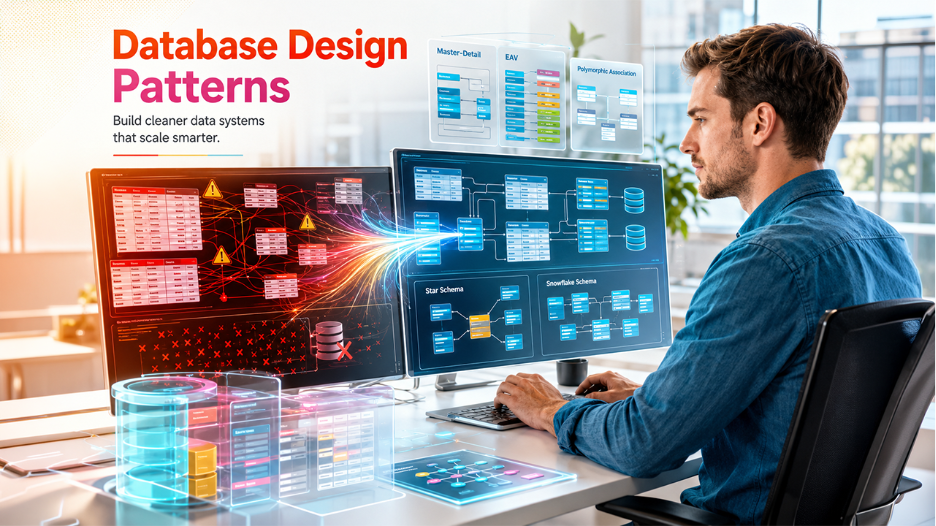

Most database problems are not really data problems; they are design problems. A slow query, a corrupted record, a report that returns different numbers every time; this does not because the data itself is bad. They happen because the structure that is holding the data was not thought through carefully enough. And fixing becomes expensive by the time it becomes obvious. Knowing the right database design pattern from the start is what separates a system that scales cleanly from one that becomes expensive to fix once the damage is obvious.

That is exactly why database design patterns exist. They give you a proven starting point, so you don’t have to guess from scratch every time you build something new much like how best coding challenges for beginners build problem-solving muscle before you tackle real systems.

What Are Database Design Patterns?

A database design pattern is a repeatable solution to a common data modeling problem. It is like an architectural blueprint that decides how tables relate to each other, how data is split or combined, and where the boundaries should be drawn between entities.

These patterns operate at different levels. Some address how to store hierarchical data while others tackle how to track historical changes, handle dynamic attributes, or separate read and write workloads for scale. However, they all share a common purpose which is reducing complexity, improving maintainability, and making systems behave predictably under real-world conditions. There is no single pattern that works for every situation. Most production databases actually use several patterns at once. The real skill is knowing which one fits your problem and understanding the trade-offs before you commit.

Here are seven database design patterns that every developer should understand to build scalable, maintainable, and high-performance data systems in 2026.

7 Database Design Patterns Explained with Examples

Normalized Form Pattern

The Problem: When the same data is stored in multiple places, updating it becomes risky. When you change a customer name in one table and forget another, and now your system has two conflicting versions of the truth. These are known as update anomalies, and they are one of the most common causes of hard-to-trace bugs.

How It Works: Normalization organizes a schema so that every fact is stored exactly once. If something needs to change, it changes in one place only. This is done by applying a progressive set of rules called normal forms.

- 1NF: Every column holds a single, indivisible value. There are no comma-separated lists inside a cell. Every row is uniquely identifiable with the help of a primary key.

- 2NF: Every non-key column depends on the entire primary key rather than just a part of it. If an order_items table is keyed on (order_id, product_id), then product_name relies only on product_id; it belongs in a separate products table.

- 3NF: Non-key columns depend only on the primary key, not on each other. If a customer’s table includes both city and state, and city determines state, then state should move to a separate cities table.

Example: Here is an unnormalized table that mixes customer and product data in a single row:

— Not normalized: customer data repeats for every order

CREATE TABLE orders_raw (

order_id INT,

customer_name VARCHAR(100),

customer_email VARCHAR(255),

product_name VARCHAR(100),

quantity INT

);

After normalization, each fact lives in its own table:

CREATE TABLE customers (

customer_id INT PRIMARY KEY,

customer_name VARCHAR(100),

customer_email VARCHAR(255)

);

CREATE TABLE products (

product_id INT PRIMARY KEY,

product_name VARCHAR(100)

);

CREATE TABLE orders (

order_id INT PRIMARY KEY,

customer_id INT REFERENCES customers(customer_id),

product_id INT REFERENCES products(product_id),

quantity INT

);

Now updating a customer’s email requires changing exactly one row in one table.

When To Use It: Transactional systems such as online banking, ticket booking, e-commerce where the integrity of every insert, update, and delete is non-negotiable.

If you’re working within SQL Server specifically, understanding how SQL Server system admin roles work becomes important as your schema grows.

2. Denormalized Form Pattern

The Problem: Normalization can hurt read performance. When a simple dashboard query requires joining five or six tables just to show a customer’s name next to a sales total, the database works harder than it needs to. At scale, those joins become real bottlenecks.

How It Works: Denormalization deliberately introduces redundancy into a schema. You add derived or replicated columns from related tables directly into a single table, so that common queries can run without expensive joins. It also makes it possible to store a historical snapshot of data at a point in time.

The trade-off is straightforward; you gain read speed and query simplicity, but you must accept the risk of inconsistency if the redundant data is not kept in sync.

Example: In a schema with Sales and Customers tables, both related by customer_id, you can denormalize by adding customer_name directly to the Sales table:

CREATE TABLE sales (

sale_id INT PRIMARY KEY,

customer_id INT,

customer_name VARCHAR(100), — redundant, but fast to query

amount DECIMAL(10,2),

sale_date DATE

);

This gives two benefits at once: faster sales queries that display the customer name without a join, and a historical record of the name the customer had at the time of the sale since names can change over time.

When To Use It: Data warehousing, analytics dashboards, and reporting layers. However, remember to never use it as a replacement for normalization in your core transactional schema; use it as a deliberate, targeted optimization layer on top.

3. Entity-Attribute-Value (EAV) Pattern

The Problem: Relational tables have a rigid structure. Every row must share the same columns. But what to do if your entities have wildly different attributes? A product catalog with thousands of product types cannot realistically define a column for every possible property. Adding a new attribute type means altering the whole table, which in turn affects every row in it.

How It Works: The EAV pattern breaks attributes out into rows instead of columns. You create three core tables: an entity table (the main object), an attribute table (the property names and their metadata), and a value table (the actual property values per entity). This makes the schema flexible enough to handle any number of dynamic attributes without structural change.

Example: A home appliance catalog where vacuum cleaners and ovens have completely different properties:

— EAV table structure

CREATE TABLE product_attributes (

entity_id INT REFERENCES products(id),

attribute VARCHAR(100),

value VARCHAR(255)

);

— Vacuum cleaner attributes

INSERT INTO product_attributes VALUES (1, ‘suction_power’, ‘2400W’);

INSERT INTO product_attributes VALUES (1, ‘capacity’, ‘2.5L’);

— Oven attributes

INSERT INTO product_attributes VALUES (2, ‘cooking_type’, ‘convection’);

INSERT INTO product_attributes VALUES (2, ‘temperature_range’, ’50-300C’);

When To Use It: Situations where the number of attributes varies per entity, attributes are unknown in advance, or new attributes need to be added dynamically. Classic EAV works for systems with strict relational requirements; JSONB is often the more practical choice in modern PostgreSQL environments.

4. Polymorphic Association Pattern

The Problem: On many platforms, a single type of entity like a comment can belong to multiple different parent types. Users comment on articles, photos, and videos, all stored in separate tables. How do you build a single comments table that can reference any one of those parents cleanly?

How It Works: Polymorphic association uses a single foreign key column (commentable_id) plus a type column (commentable_type) to point to rows in different tables depending on the type value. This keeps the schema lean and avoids duplicating the comments table for every parent type.

Example:

CREATE TABLE comments (

id SERIAL PRIMARY KEY,

body TEXT,

commentable_id INT, — points to posts, photos, or videos

commentable_type VARCHAR(50) — ‘Post’, ‘Photo’, or ‘Video’

);

The problem with commentable_id is that it points to different tables depending on the type, so the database cannot enforce a foreign key constraint. This means no cascade deletes, integrity checks and orphaned records can build up silently over time. The fix separate tables per type to keep data clean, but it is too much work.

CREATE TABLE post_comments (

id SERIAL PRIMARY KEY,

body TEXT,

post_id INT REFERENCES posts(id) ON DELETE CASCADE

);

CREATE TABLE photo_comments (

id SERIAL PRIMARY KEY,

body TEXT,

photo_id INT REFERENCES photos(id) ON DELETE CASCADE

);

When To Use It: When schema flexibility is the priority and application-level integrity enforcement is acceptable. For those systems that need long-term data integrity enforced at the database level, separate tables per type are almost always the better trade-off.

5. Master-Detail Pattern

The Problem: Many real-world relationships involve a parent entity with a variable number of child records. An invoice can have one or many line items. A customer can have one or many branches. A standard flat table cannot capture this “one parent, many children” relationship naturally.

How It Works: The Master-Detail pattern uses two entities linked by a 1:N relationship. The child entity (Detail) is dependent on the parent (Master); the primary key of the parent becomes part of the primary key of the child. This creates a clear ownership structure where each child record belongs to exactly one parent.

Example: An ERP application managing customers and their branches:

CREATE TABLE customer (

customer_id INT PRIMARY KEY,

customer_name VARCHAR(100),

tax_id VARCHAR(50)

);

CREATE TABLE branch (

customer_id INT REFERENCES customer(customer_id),

branch_no INT,

address VARCHAR(255),

telephone VARCHAR(20),

PRIMARY KEY (customer_id, branch_no)

);

Each row is identified by the combination of its customer_id and its own branch_no. You cannot have a branch without a customer; the relationship is enforced at the schema level.

When To Use It: Anywhere you have a parent entity with a variable number of related child records such as orders and order items, invoices and line items, projects and tasks. It is one of the most common patterns in transactional applications.

6. Star Schema Pattern

The Problem: Analytical queries that power dashboards, BI reports, and business summaries are fundamentally different from transactional queries. They aggregate large volumes of data across multiple dimensions (time, region, product, channel) and need to return results fast. Normalized schema is not built for this.

How It Works: The Star schema places a central fact table at its core. The fact table holds measurable data like revenue, quantity sold, number of events along with foreign keys that point to surrounding dimension tables. Dimension tables are denormalized and describe the context of each fact: what product, which region, what time period. Each query is essentially a join from the fact table to one or more-dimension tables.

The model is called “star” because the ERD with the fact table at the center and dimension tables that go outward literally resemble a star.

Example structure:

— Central fact table

CREATE TABLE sales_fact (

sale_id INT PRIMARY KEY,

amount DECIMAL(10,2),

quantity INT,

date_id INT REFERENCES dim_date(date_id),

product_id INT REFERENCES dim_product(product_id),

region_id INT REFERENCES dim_region(region_id)

);

— Dimension table example

CREATE TABLE dim_product (

product_id INT PRIMARY KEY,

product_name VARCHAR(100),

product_family VARCHAR(50)

);

When To Use It: Data warehousing and business intelligence. It is the default choice for most analytical use cases because it is easy to understand, simple to query, and fast to execute.

7. Snowflake Schema Pattern

The Problem: Star schema dimension tables are intentionally denormalized. They store everything about a dimension in one flat table. But some dimensions are genuinely complex. A location dimension might need to capture a neighborhood, city, county, state, and country. Flattening that into one table creates redundancy and makes the dimension harder to maintain.

How It Works: The Snowflake schema normalizes the dimension tables. Each dimension is expressed as its own subschema, where the main dimension table is supported by a set of lookup tables representing its hierarchy. The result looks like a snowflake: a central fact table, dimension tables branching out from it, and further lookup tables branching out from those.

Example: A location dimension broken into its natural hierarchy:

CREATE TABLE dim_country (

country_id INT PRIMARY KEY,

country_name VARCHAR(100)

);

CREATE TABLE dim_state (

state_id INT PRIMARY KEY,

state_name VARCHAR(100),

country_id INT REFERENCES dim_country(country_id)

);

CREATE TABLE dim_city (

city_id INT PRIMARY KEY,

city_name VARCHAR(100),

state_id INT REFERENCES dim_state(state_id)

);

Star vs Snowflake at a Glance

| Schema | Snowflake Schema | |

| Dimension tables | Denormalized, single table | Normalized into sub-tables |

| Query joins | Fewer | More |

| Storage | Higher | Lower |

| Query speed | Generally faster | Slightly slower |

| Best for | Dashboards, ad-hoc, BI | Complex hierarchies, storage-sensitive environments |

When To Use It: When your dimensions have complex hierarchies that cannot be cleanly expressed in a single flat table, or when storage efficiency and data integrity within dimensions are a priority. Star schema is simpler whereas snowflake is more rigorous.

Conclusion

No database stays simple forever. As your data grows, your queries get more complex, and your team expands. The structural decisions you made early start to matter more and more. That is what makes database design patterns so valuable.

The right pattern is not the most sophisticated one. It is the one that fits your actual problem. Normalization keeps your transactional data clean. Denormalization makes your reads fast. EAV handles dynamic attributes without schema chaos. Star and snowflake schemas make analytics fast and manageable. Master-detail models parent-child relationships cleanly. Polymorphic associations give you flexibility at a cost you should understand before accepting.

Use these patterns as a starting vocabulary, not a rigid checklist. Most real systems combine several of them and that is perfectly fine, as long as each choice is deliberate

Share on media

Share on media

%201.png)

%201.png)

%201.png)